It's very useful to know which mind is responsible for the content of an essay, a report, a codebase, or any intellectual work product. This is why we have authorship in the first place. It's why the scientific community developed the CRediT (Contributor Roles Taxonomy) system: when someone finds fraud in the data analysis of a multi-author paper, they can see who was actually responsible for that analysis, rather than blaming the person who only designed the experiments.

For the first time in history, there's a new category of mind available. It's being used, to one degree or another, in a substantial minority of intellectual output — and almost certainly, in the near future, the majority. Now is the time to be developing standards, because there are genuinely new things happening that our existing attribution systems weren't designed for.

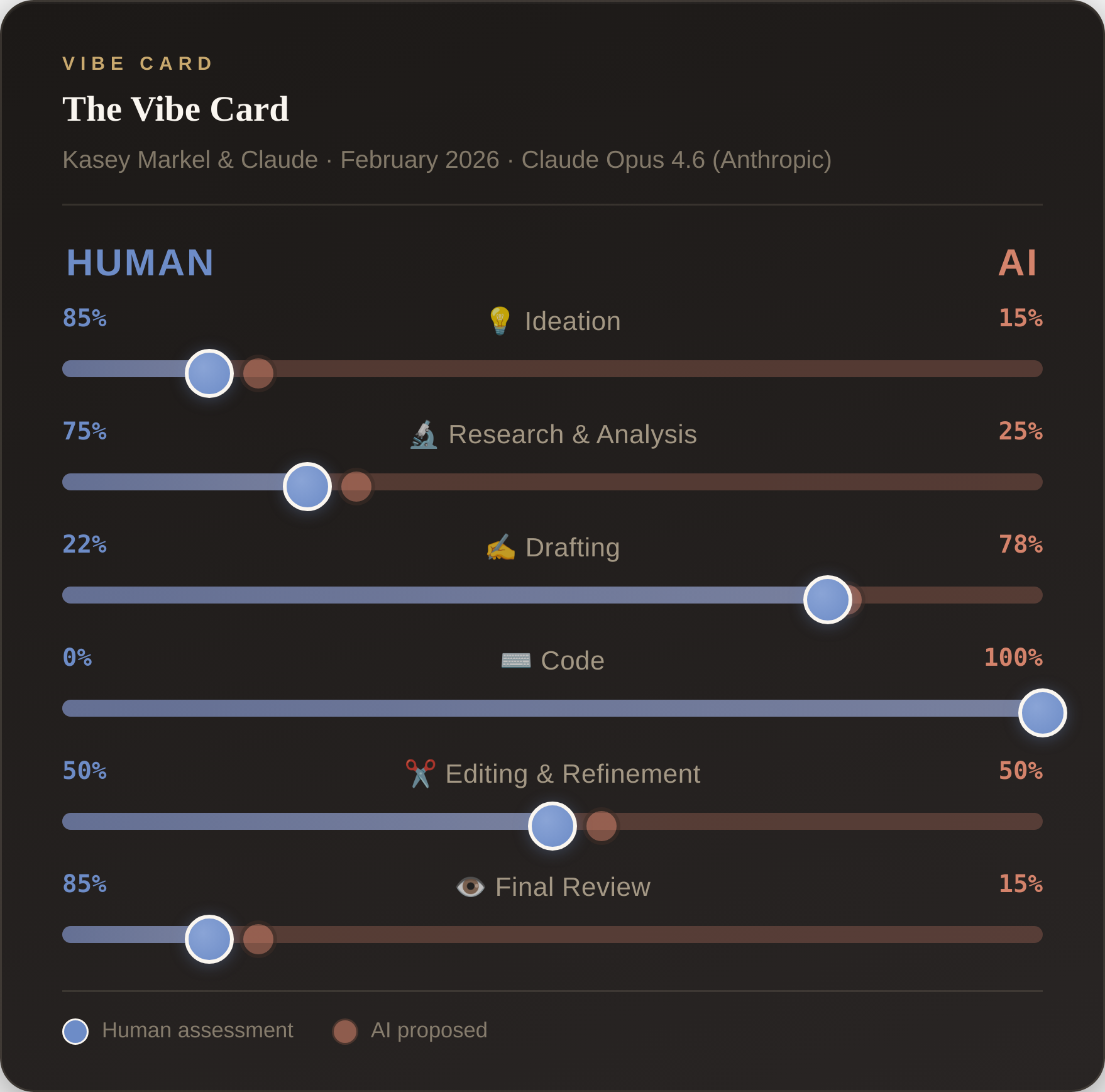

I'm proposing a simple standard. I'm calling it the Vibe Card.

What It Is

The Vibe Card is a small visual element that sits at the top of an essay, report, or work product — right under the title, like the epistemic status remarks that rationalist bloggers often include. It contains a set of sliders, each representing a different dimension of contribution, ranging from "Fully Human" on the left to "Fully AI" on the right.

The dimensions are inspired by the CRediT taxonomy used for scientific authorship, adapted for the kinds of intellectual work people actually produce with LLMs:

- Ideation — Who came up with the core ideas and questions?

- Research & Analysis — Who gathered data, ran analyses, found sources?

- Drafting — Who wrote the text?

- Code — Who wrote any code involved in the project? (Include only if applicable.)

- Editing & Refinement — Who reviewed and improved the work?

- Final Review — How thoroughly did a human review the final product?

At the bottom of the card, you note the specific AI model and version used — not just "Claude" but "Claude Opus 4.6" or "GPT-4o" or whatever was actually in the loop. Model capabilities vary enormously, and a Vibe Card from 2024 using GPT-3.5 means something very different from one using a 2026 frontier model.
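One way to picture what a card holds is as a small record: one slider value per dimension, plus the model string. The sketch below is an illustration only, not the published Vibe Card code. The `VibeCard` class, the 0 to 100 scale, the validation rules, and the example values are all assumptions.

```python
from dataclasses import dataclass

# Dimension names come from the list above. Everything else here (class name,
# 0-100 scale, validation rules) is an assumption made for illustration.
DIMENSIONS = (
    "Ideation",
    "Research & Analysis",
    "Drafting",
    "Code",
    "Editing & Refinement",
    "Final Review",
)
OPTIONAL_DIMENSIONS = {"Code"}  # the essay says to include Code only if applicable


@dataclass
class VibeCard:
    """AI share per dimension: 0 means Fully Human, 100 means Fully AI."""

    model: str
    sliders: dict

    def __post_init__(self):
        if not self.model.strip():
            raise ValueError("state the exact model name and version")
        for name, value in self.sliders.items():
            if name not in DIMENSIONS:
                raise ValueError(f"unknown dimension: {name}")
            if not 0 <= value <= 100:
                raise ValueError(f"{name} must be between 0 and 100, got {value}")
        missing = set(DIMENSIONS) - OPTIONAL_DIMENSIONS - set(self.sliders)
        if missing:
            raise ValueError(f"missing dimensions: {sorted(missing)}")


# Illustrative values only, not the actual card for any real essay.
card = VibeCard(
    model="Claude Opus 4.6",
    sliders={
        "Ideation": 10,
        "Research & Analysis": 40,
        "Drafting": 80,
        "Editing & Refinement": 50,
        "Final Review": 10,
    },
)
```

Rejecting an empty model string keeps that field from being silently skipped, though it cannot check that the name is the right one. That still has to be verified by hand.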

A quick cautionary note on this: AI models are bad at self-identification. The model that built this very essay (Claude Opus 4.6) initially labeled itself as Opus 4.5 when generating the Vibe Card component. If you're asking an AI to help generate your Vibe Card, you should explicitly prompt it to state its exact model name and version, then verify it yourself. The interactive tool includes a prompt template for this — because if the whole point of the standard is transparency, getting the model name wrong kind of defeats the purpose.

Why It Matters

The first reason is simple honesty. If a colleague sends you a report, it's useful to know whether they spent forty hours writing it or whether they spent twenty minutes talking into their phone on a walk and had Claude build a first draft. The information content is different. The appropriate level of trust is different. The kind of feedback that's useful is different.

The second is accountability. The CRediT taxonomy became valuable in science partly because it clarifies responsibility. If fraud is discovered, you can trace it to the people who actually handled that part of the work. AI can't take responsibility, at least not yet. But a Vibe Card that shows "Research & Analysis: 90% AI" tells you that a human probably didn't carefully check every step, so any errors in the analysis may have gone uncaught. That's important information.

Related: consider the "Final Review" slider. If someone sends you an essay where Drafting is 90% AI and Final Review is also way over on the AI side, that's telling you something important — probably that the human barely read their own work product before shipping it. That's useful context for deciding how much weight to give it.

The third is just that it's the right thing to do for the intellectual community. We're in a transition period where norms around AI use are still forming. Being transparent about it — ahead of any requirement to be — builds trust and sets a good precedent.

And the fourth, honestly, is that it's kind of fun. There's something satisfying about being explicit about your process.

How It Works in Practice

The Vibe Card is interactive. The workflow goes like this:

- You ask your AI to propose initial slider values for each dimension, based on the work it actually did.

- The AI generates a card with its proposed values.

- You drag the sliders to where you think they should actually be.

- You hit "Save as PNG" and get a transparent-background image to embed at the top of your essay or report.
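To make the slider idea concrete, here is a rough text-only rendering of a card. It is a stand-in for illustration, not the interactive component or its PNG export. The function names, the 0 to 100 scale, and the sample values are hypothetical.

```python
def render_slider(name: str, ai_share: int, width: int = 20) -> str:
    """Draw one slider as text; the marker moves right as the AI share grows."""
    if not 0 <= ai_share <= 100:
        raise ValueError(f"ai_share must be between 0 and 100, got {ai_share}")
    position = round(ai_share * width / 100)
    track = ["-"] * (width + 1)
    track[position] = "o"
    return f"{name:<22}Human |{''.join(track)}| AI"


def render_card(sliders: dict, model: str) -> str:
    """Render every slider, then the model line at the bottom of the card."""
    lines = [render_slider(name, value) for name, value in sliders.items()]
    lines.append(f"Model: {model}")
    return "\n".join(lines)


# Hypothetical final values after the human has adjusted the AI's proposal.
final_values = {"Ideation": 10, "Drafting": 80, "Final Review": 10}
print(render_card(final_values, "Claude Opus 4.6"))
```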

An optional feature: the card can display both the AI's proposed values and the human's final values as two separate dots on each slider — the AI's proposal as a faded amber dot, the human's final call as a solid green one. The gap between them is itself interesting data. It tells you something about how the human and AI perceive their relative contributions, and whether the human thought the AI was overclaiming or underclaiming its role.
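The gap between the two dots can be treated as a simple difference per dimension. The sketch below assumes slider values are AI share on a 0 to 100 scale. The function names, the 5-point tolerance, and the sample numbers are hypothetical and not taken from the published code.

```python
def slider_gaps(ai_proposed: dict, human_final: dict) -> dict:
    """Human's final value minus the AI's proposal, in percentage points."""
    return {
        name: human_final[name] - ai_proposed[name]
        for name in ai_proposed
        if name in human_final
    }


def describe_gap(gap: int, tolerance: int = 5) -> str:
    """Values are AI share, so a negative gap means the human lowered the AI's claim."""
    if abs(gap) <= tolerance:
        return "roughly agreed"
    return "AI overclaimed" if gap < 0 else "AI underclaimed"


# Hypothetical values for illustration.
ai_proposed = {"Ideation": 30, "Drafting": 80, "Editing & Refinement": 60}
human_final = {"Ideation": 10, "Drafting": 80, "Editing & Refinement": 70}

gaps = slider_gaps(ai_proposed, human_final)
for name, gap in gaps.items():
    print(f"{name}: {gap:+d} points ({describe_gap(gap)})")
```

The tolerance keeps small nudges from being read as disagreement. Where to set it is a judgment call.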

For this essay, for example, Claude proposed that Drafting was about 80% AI. Looking at it, I'd say that's roughly right: the ideas and structure came from me talking on a walk, but the actual prose is mostly Claude's. I might nudge the "Editing & Refinement" slider a bit, but the overall picture is honest. The final card is mine to approve.

The Code

The Vibe Card is designed to be a reusable, open standard. The code for generating these cards — including the interactive slider component, the dual-dot AI/human comparison, and the PNG export — is publicly available on my GitHub. Anyone who wants to adopt the standard can grab the code, drop it into their own site, and generate Vibe Cards for their own work. The whole process takes about thirty seconds.

Adopt It

You don't have to use my exact categories. You don't have to use my exact visual design. But I'd encourage anyone publishing intellectual work that involves AI to put something at the top that says how much of this was you and how much was the machine. The norms we set now will matter as AI contribution becomes the default rather than the exception.

Ultimately, any human who signs their name at the top of a work product is taking responsibility for everything in it. That hasn't changed. But a Vibe Card helps your readers — and your future self — understand how that responsibility was distributed in practice.